To Thrive with AI, Your Team Needs Cultural Resilience

Nine Questions that Determine Your Team's AI Resilience

Mulally Clapped, and Ford Stopped Dying

What Ford’s CEO understood about culture is now the difference between companies that survive AI and companies that quietly fracture.

In 2006, Ford was on track to lose $17 billion. The company was paralyzed by a culture of fear, secrecy, and internal competition. Showing weakness in an executive meeting was historically a fireable offense.

Alan Mulally arrived as CEO and instituted a single ritual: a weekly Business Plan Review. Every executive had to color-code their progress. Green for on track. Yellow for at risk. Red for off-plan with no solution.

For weeks, despite the company hemorrhaging billions, every chart came back green.

Then Mark Fields, head of the Americas, showed a red slide for a major launch delay on the Ford Edge. The room froze. Everyone expected Fields to be terminated on the spot.

Mulally clapped.

He praised Fields for the visibility and asked the room, “Who can help Mark with this?”

That single moment broke Ford’s fragile culture. Executives stopped hiding their failures and started pooling their expertise to solve them. Ford avoided the bailout that humbled its competitors and returned to profitability.¹

What Mulally built at Ford was organizational resilience: the ability of a company to absorb shocks, learn from failures, and adapt faster than its environment changes. It is the same characteristic every American company now needs to develop, because AI has accelerated the pace of innovation and transformation.

Industry disruption now arrives weekly. A fragile culture, one that has to assign blame before it can learn, cannot learn fast enough to compete.

This article gives you the framework to understand what is about to happen to your team, a tool to measure where you stand, and a plan for what to do Monday morning.

What AI Is Actually Going to Do to Your Team

When a company adopts AI in a meaningful way, three things change at once.

The tools your team uses change, sometimes every few weeks.

The work itself changes, because tasks that took hours now take seconds and tasks that used to be impossible become routine.

The question of who does what changes, because some of the work will be done by software, while new kinds of work will appear that no one on your team has done before.

That is the test your company is now facing. The deciding factor is whether your culture can match the pace of adaptation the technology requires.

Resilience Is a Set of Behaviors. Act Accordingly.

When most leaders hear the word resilience, they think of grit, perseverance, or a positive attitude. The research on how organizations actually survive crisis tells a different story. Resilience is something teams do, made up of specific behaviors you can watch happening on any given Tuesday afternoon.

Two researchers, Karl Weick and Kathleen Sutcliffe, spent decades studying organizations that operate in genuinely high-stakes environments. Nuclear-powered aircraft carriers. Air traffic control towers. Hospital emergency rooms. They wanted to understand why these organizations, where one mistake can have fatal consequences, have remarkably few catastrophic failures.

What they found is that resilient teams share a set of habits they called mindful organizing.

The teams pay attention to small problems before they become big ones.

They share information openly.

They treat the person closest to a problem as the expert on it, regardless of rank.

They assume failure is always possible and design their conversations accordingly.²

Fragile teams do the opposite.

They suppress small problems hoping those problems will resolve themselves.

They report progress in ways that protect their reputations rather than reflect reality.

They route every decision through hierarchy.

They assume that past success means future success, and stop watching for the failure modes they have not yet experienced.

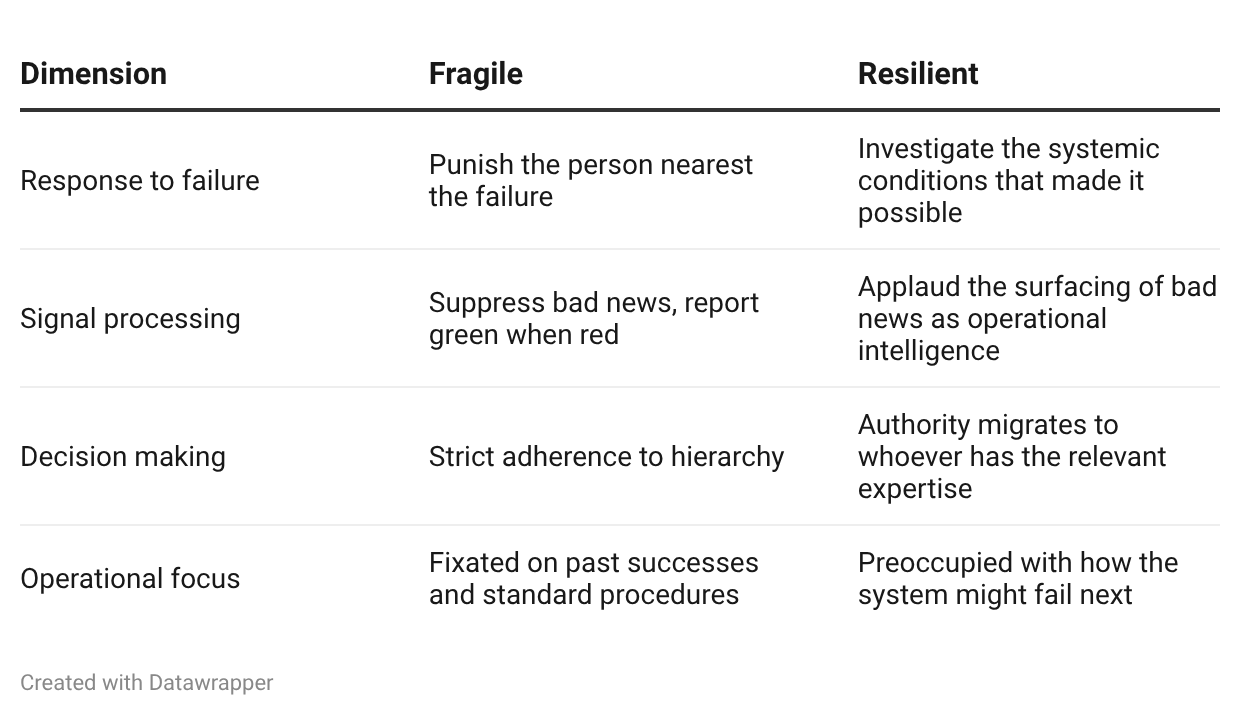

The difference between the two shows up in four places.

There is a phrase people inside fragile companies use to describe the way bad news gets hidden. They call it watermelon reporting 🍉. Green ✅ on the outside, red ❌ on the inside.

The status report says everything is on track. The reality is that the project is failing, and everyone close to it knows. The leadership above does not know, because the culture has trained the people below them that surfacing the truth is dangerous.

In resilient organizations, the working assumption is different.

When something goes wrong, leadership starts by asking what conditions made the mistake reasonable from the perspective of the person who made it. Failures are treated as evidence that the system has a flaw, not that a person has a flaw. That single shift in assumption is what makes telling the truth rational instead of risky.³

The same logic shows up at Etsy, the online marketplace. Under engineering leader John Allspaw, Etsy deployed new code to its live website dozens of times every day. That pace would be impossible in a company where engineers feared being blamed for outages. Allspaw made a deal with his team. If an engineer who caused a problem explained exactly what they did and why it seemed like the right call at the time, the company would not punish them. In return, the engineer was responsible for helping the company learn from the mistake so it would not happen again. As Allspaw put it, engineers were on the hook for making the system safer.⁴

Blamelessness makes high standards possible.

Why AI Makes This Test More Urgent

For most of business history, the changes that hit a company arrived slowly enough that even fragile cultures could survive them. A bad year was followed by a good year. Mistakes had time to surface. The cost of hiding bad news was real but manageable, because there was usually time to recover from the mess hidden bad news creates.

AI changes this in a specific way: it removes the time.

Here is what that looks like in practice. A team adopts an AI tool to handle customer service inquiries. The tool works well at first. Three months in, an employee notices that the tool is occasionally giving customers incorrect information about return policies.

In a healthy culture, the employee mentions it in the next team meeting, the team investigates, and they fix the underlying issue before the errors do real damage. The fragile version of this story ends with a regulatory complaint, a class-action lawsuit, or a viral social media incident, all tracing back to the moment one employee noticed something was off and decided not to say anything.

That gap between noticing and saying is what fragile cultures produce. AI makes that gap catastrophic, because the speed at which AI tools change means that a problem hidden today will compound into a much larger problem within months, not years.

The research backs this up.

A 2025 study in the journal Strategy & Leadership looked at companies adopting AI and found something important: the technology by itself does not make a company more resilient. What matters is whether the company already has the cultural ingredients in place, specifically a culture of innovation, an ability to move quickly, and leadership that understands the change. Companies with those ingredients found that AI made them stronger. Companies without them found that AI exposed every weakness their culture already had.⁵

A separate study found that when employees feel anxious about whether AI will eliminate their jobs, they start hiding what they know from their coworkers, because they believe their unique knowledge is what protects their position. That hoarding behavior makes the team worse at solving problems exactly when the team needs to be solving problems faster.⁶

The pattern is consistent.

Healthy cultures absorb AI and grow. Unhealthy cultures absorb AI and crack. The technology amplifies whatever was already there.

There is one more signal worth paying attention to inside your own company. Notice who gets celebrated. In fragile organizations, the heroes are the people who work eighty-hour weeks to put out fires. In resilient organizations, the heroes are the people who notice systemic flaws before they become fires.

Whichever group your company rewards tells you which kind of company you actually run, regardless of what the values poster says.

A Tool for Measuring Your Team’s Readiness

So how do you know which kind of culture your team has? The good news is that researchers have already built a tool for exactly this question.

It is called the Mindful Organizing Scale, first published by Timothy Vogus and Kathleen Sutcliffe (the same Sutcliffe who studied high-reliability organizations) as the Safety Organizing Scale.⁷ It is a short, validated assessment that measures the specific behaviors that separate resilient teams from fragile ones. The scale has been tested in high-stakes environments such as hospitals as well as in traditional enterprise workplaces, and it consistently produces reliable results.

Here is what makes it useful for the AI moment we are in. The scale was originally built to measure how teams handle high-stakes complexity, the kind of environment where small failures can cascade quickly. AI integration creates exactly that kind of environment inside ordinary companies. The scale is a nine-question instrument, and each question turns out to map directly onto a specific way that AI breaks fragile organizations.

Take the Assessment

Rate each question from 1 to 5, where 1 means strongly disagree and 5 means strongly agree. Answer based on how your immediate team actually operates, not how you wish it operated. The honest answer is the only useful answer.

1. When new problems come up, we talk with coworkers about what to watch for.

2. We spend time naming the work we cannot afford to get wrong.

3. We talk about different ways to do our normal work.

4. We know what each person on the team is good at.

5. We share our specialized skills so everyone knows who to go to.

6. In a crisis, we quickly combine what we each know to solve it.

7. We talk about mistakes and what they teach us.

8. When something goes wrong, we talk about how to prevent it next time.

9. When someone raises a problem, we look for a bigger pattern instead of treating it as a one-off.

Add up your scores. The total will fall between 9 and 45.

38 to 45: Highly Resilient.

Your team treats anomalies as useful information, defers to whoever has the relevant expertise, and views failures as opportunities to improve the system. You are positioned to absorb AI disruption and turn it into an advantage.

28 to 37: Transitional.

Your team has some resilient habits but falls back on hierarchy or blame when under real pressure. You may have the agility to react to AI-driven change, but you lack the safety to learn from it as fast as the technology requires.

9 to 27: Fragile.

Communication is siloed, bad news is suppressed, and failure is treated as an individual flaw. The pace of AI-driven change will cause significant fracture and burnout in your team within the next year or two unless leadership intervenes now.
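For teams that collect responses in a spreadsheet or form, the scoring key above can be sketched in a few lines of Python. This is only an illustrative sketch: the function name `score_assessment` and the `BANDS` table are hypothetical names chosen here, and the cut-offs are copied from the three bands listed above.

```python
from typing import Sequence

# Band floors copied from the scoring key in this article.
BANDS = [
    (38, "Highly Resilient"),
    (28, "Transitional"),
    (9, "Fragile"),
]


def score_assessment(ratings: Sequence[int]) -> tuple[int, str]:
    """Sum nine 1-to-5 ratings and return (total, band label)."""
    if len(ratings) != 9:
        raise ValueError("expected exactly nine ratings")
    if any(not isinstance(r, int) or r < 1 or r > 5 for r in ratings):
        raise ValueError("each rating must be a whole number from 1 to 5")
    total = sum(ratings)
    # Walk the bands from highest floor to lowest and stop at the first match.
    for floor, label in BANDS:
        if total >= floor:
            return total, label
    raise AssertionError("unreachable: the minimum possible total is 9")


# Example with made-up ratings for one respondent.
total, band = score_assessment([4, 3, 4, 5, 3, 4, 2, 3, 4])
print(total, band)  # 32 Transitional
```

The function rejects incomplete or out-of-range responses rather than guessing, since a partial total would land in the wrong band.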

The Real Diagnostic Is the Gap Between Scores

Your individual score matters. What matters more is what happens when every member of your team takes the assessment independently and you compare the results.

If individual scores vary by more than eight to ten points across your team, that gap is the real signal. It means psychological safety is not distributed equally. Almost always, leaders score the team higher than the frontline does. The leaders see a team that talks about problems openly. The frontline sees a team that has learned to perform openness in front of leadership and tell the truth elsewhere.

That gap is exactly what AI disruption exploits. The bad news is happening. The signals are arriving. The frontline sees them. Leadership does not, because the culture has trained the frontline that surfacing them is unsafe.
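The spread check described above is simple arithmetic, and a rough sketch may make it concrete. The function name `score_spread` is hypothetical, and the default threshold of 8 is an assumption: it takes the lower end of the "eight to ten points" range mentioned above, so adjust it to whatever your team considers meaningful.

```python
def score_spread(totals: list[int], threshold: int = 8) -> tuple[int, bool]:
    """Return (spread, flagged), where spread is the highest total minus the lowest."""
    if len(totals) < 2:
        raise ValueError("need at least two scores to compare")
    spread = max(totals) - min(totals)
    # Flag the team when the spread exceeds the chosen threshold (an assumption).
    return spread, spread > threshold


# Made-up totals for a five-person team, for illustration only.
team_totals = [41, 39, 33, 30, 29]
print(score_spread(team_totals))  # (12, True)
```

A flagged result is a prompt for a conversation, not a verdict. The number only says that people on the same team are describing different teams.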

A fragile culture in 2006 was a slow-motion problem. A fragile culture in 2026 is an acute one.⁸

What to Do Monday Morning

Send the assessment to every member of your team. Have them complete it independently. Aggregate the results without identifying individuals. Compare your own score to the team’s average.

If the gap is meaningful, the conversation about that gap matters more than the score itself.
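The aggregation step can be sketched as follows, assuming you have collected each person's total without names attached. The function name `leader_gap` and the dictionary keys are hypothetical, and the sample numbers are invented for illustration.

```python
def leader_gap(leader_total: int, team_totals: list[int]) -> dict[str, float]:
    """Compare the leader's own total with the anonymous team average."""
    if not team_totals:
        raise ValueError("need at least one team response")
    team_average = sum(team_totals) / len(team_totals)
    return {
        "team_average": round(team_average, 1),
        "leader_total": leader_total,
        # Positive gap: the leader rates the team higher than the team rates itself.
        "gap": round(leader_total - team_average, 1),
    }


# Totals only, with no names, so individual respondents are not identified.
summary = leader_gap(leader_total=40, team_totals=[34, 31, 29, 36, 30])
print(summary)  # {'team_average': 32.0, 'leader_total': 40, 'gap': 8.0}
```

Working from totals alone keeps the exercise anonymous. On very small teams even an average can reveal individuals, so consider sharing only the gap.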

Then bring the team together and use these three prompts to debrief.

Think of the last time a major project failed or missed its target. Was the immediate focus on who caused it, or what systemic factors allowed it?

If an entry-level employee spotted a flaw in an AI implementation plan designed by a vice president, how comfortable would they be raising the issue in a team meeting?

What is the unwritten rule on our team about delivering bad news to leadership?

The answers will tell you what Mulally figured out the week he started clapping for red slides. The information you need is already inside the building. The only question is whether you have built a culture where it can reach you in time.

Appendix: How Each Question Predicts an AI-Specific Failure Mode

Walking through the questions one at a time shows why this assessment is the right tool for this moment.

Question 1: When discussing emerging problems with co-workers, we usually discuss what to look out for.

AI tools produce warning signs before they produce outright failures. A customer service AI starts giving subtly wrong answers in unusual cases. An automated decision tool begins making choices that drift outside what it was designed for. A workflow tool quietly degrades the quality of an output without anyone noticing for weeks.

Teams that habitually talk about what to watch for catch these warning signs early. Teams that lack the habit catch them only after the failures have stacked up.

Question 2: We spend time identifying activities we do not want to go wrong.

The most expensive AI failures happen in places where no one was watching, because no one named those places as critical in advance. Imagine a hospital that deploys AI to help with patient scheduling. If the team identified billing accuracy as a critical activity that cannot fail, they will check the AI’s effect on billing carefully. If they did not name it as critical, they will discover the billing problem from an angry insurance company months later.

Teams that map out what cannot fail before they deploy AI catch problems quickly. Teams that deploy AI broadly without that mapping discover their critical paths only after those paths have already broken.

Question 3: We discuss alternatives as to how to go about our normal work activities.

This is the question that most directly predicts how a team will handle AI integration. A team that rigidly defines work as a single fixed process cannot absorb AI-driven workflow change. When the new tool changes how the work gets done, the team experiences it as disruption rather than adaptation.

A team that habitually discusses alternative ways to do its work has already built the cognitive flexibility AI integration requires. The AI transition feels like a continuation of how the team already operates.

Question 4: We have a good map of each person’s talents and skills.

AI redistributes work based on what humans add that machines cannot. Some routine tasks your senior people do today will be done better by AI within a year. Some work that requires judgment, context, or creativity will become more important.

If you know your people deeply, you can reassign work intelligently as the technology absorbs the routine. If you operate on title and seniority alone, you end up with senior people doing work AI has now made obsolete, while junior people with the relevant judgment go underused.

Question 5: We discuss our unique skills with each other so that we know who has relevant specialized skills.

When an AI deployment surfaces a problem no one anticipated, someone on the team usually has the specific knowledge to solve it. Whether that knowledge gets to the problem in time depends on whether the team already knows who has it.

Teams that have made their expertise visible to each other route problems to the right person quickly. Teams that have not done so waste critical hours discovering capabilities they already had.

Question 6: When a crisis occurs, we rapidly pool our collective expertise to attempt to resolve it.

The defining feature of AI disruption is speed. The pace of new model releases, capability shifts, and downstream changes to how work gets done outruns the response capacity of slow-moving organizations.

Teams that pool expertise quickly survive the speed. Teams that route every decision up through hierarchy find that their decisions arrive after the window for them has already closed.

Question 7: We talk about mistakes and ways to learn from them.

Early AI deployments fail often. The error rate of new tools, new workflows, and new handoffs between humans and AI is high at first, because the systems are still being calibrated to the specific company. This is normal and expected.

Teams that openly discuss mistakes turn each failure into useful information that improves the next iteration. Teams that hide mistakes accumulate hidden problems that compound until something serious breaks.

Question 8: When errors happen, we discuss how we could have prevented them.

This question separates teams that learn forward from teams that learn defensively. Forward learning produces actual changes to how the work gets done. Defensive learning produces blame and the appearance of accountability without any real change.

AI sharpens this distinction because the same kinds of errors repeat across deployments. A team that examines prevention systematically gets better with every error. A team that just assigns fault keeps making the same kinds of errors with each new tool.

Question 9: When someone brings up a problem, we look for a larger pattern rather than dismissing it as a one-off event.

This is the highest-leverage question in the AI context. AI problems almost never arrive as isolated events. A single bad output is usually a symptom of something larger: a misaligned prompt, a flawed integration with an existing system, an issue with the data the AI was trained on, or a workflow assumption that no longer holds in the new environment.

Teams that look for the pattern catch the underlying problem early. Teams that dismiss the report as a one-off keep paying for the same hidden failure across dozens of downstream incidents.

The nine questions, taken together, measure whether a team can sense, route, learn from, and adapt to fast-moving change. AI is the highest-frequency change most companies have ever faced. A team that scores high on this instrument is built for exactly the kind of pressure AI creates. A team that scores low is not. The score does not tell you whether your team can adopt the technology. It tells you whether your team can survive the integration.

Appendix: References

¹ Hoffman, Bryce G. American Icon: Alan Mulally and the Fight to Save Ford Motor Company. New York: Crown Business, 2012.

² Weick, Karl E., and Kathleen M. Sutcliffe. Managing the Unexpected: Sustained Performance in a Complex World. 3rd ed. Hoboken, NJ: John Wiley & Sons, 2015.

³ Dekker, Sidney. The Field Guide to Understanding ‘Human Error’. 3rd ed. Farnham, UK: Ashgate Publishing, 2014.

⁴ Allspaw, John. “Blameless PostMortems and a Just Culture.” Code as Craft (Etsy engineering blog), May 22, 2012. https://www.etsy.com/codeascraft/blameless-postmortems.

⁵ “Artificial Intelligence and Organizational Resilience: The Mediating Role of Agility, Innovation, and Digital Leadership.” Strategy & Leadership. Emerald Publishing, 2025. https://www.emerald.com/sl/article/doi/10.1108/SL-08-2025-0275.

⁶ “How Artificial Intelligence-Induced Job Insecurity Shapes Knowledge Dynamics: The Mitigating Role of Artificial Intelligence Self-Efficacy.” Journal of Innovation & Knowledge, 2024. https://www.sciencedirect.com/science/article/pii/S2444569X2400129X.

⁷ Vogus, Timothy J., and Kathleen M. Sutcliffe. “The Safety Organizing Scale: Development and Validation of a Behavioral Measure of Safety Culture in Hospital Nursing Units.” Medical Care 45, no. 1 (2007): 46–54.

⁸ Edmondson, Amy C. The Fearless Organization: Creating Psychological Safety in the Workplace for Learning, Innovation, and Growth. Hoboken, NJ: John Wiley & Sons, 2018.

Additional Background Reading

Roberto, Michael A. Why Great Leaders Don’t Take Yes for an Answer: Managing for Conflict and Consensus. 2nd ed. Upper Saddle River, NJ: Pearson FT Press, 2013.

Taleb, Nassim Nicholas. Antifragile: Things That Gain from Disorder. New York: Random House, 2012.

Wildavsky, Aaron. Searching for Safety. New Brunswick, NJ: Transaction Publishers, 1988.

A note on sources. The Mulally narrative draws on Bryce Hoffman’s reporting from inside Ford during the turnaround. Hoffman covered Ford for the Detroit News from 2005 onward and was granted unprecedented access to Mulally, Bill Ford, the Ford family, senior executives, and internal company documents during the writing of American Icon. The story has been retold in many secondary sources, but the specific dialogue and scene details trace back to Hoffman’s primary reporting.